CMUSphinx is the project of the week on SourceForge

SourceForge has made some missteps, but it is definitely getting better now. And they provide hosting that is crazy slow but still very valuable for us.

The nice thing is that CMUSphinx has been selected as the project of the week. Congratulations to the team!

As a bonus, we have uploaded a new G2P Seq2Seq model and added an acoustic model for one of the most beautiful languages in the world, Italian!

Should you select Raspberry Pi 3 or Raspberry Pi B+ for CMUSphinx?

This is an interesting and very important question for our users. Embedded software is one of the core use cases: nobody cares about Intel these days, and everyone needs to run on small, efficient ARM and MIPS devices. Microsoft is also joining the race. Raspberry Pi is a first choice here. But there are many models available, so you might wonder which one you need to choose for your application.

Luckily, our user Alan McDonley has recently published an evaluation of Raspberry Pi 3 and Raspberry Pi B+ for common speech recognition tasks. Of course, the report misses some details: it does not really tune the performance of the recognizer, and it does not cover the very important keyword spotting mode, the primary mode for devices like the Pi.

Please check it out on Element14.

See the video too

Raspberry Pi 3 Speech Recognition Road Test from Alan McDonley on Vimeo.

We are actually very interested in performance evaluations on various devices and very much need your help here. Of course, we cannot get our hands on every device out there, so results, logs, and comments would be much appreciated!

Grapheme-to-phoneme tool based on sequence-to-sequence learning

Recurrent neural networks (RNN) with long short-term memory (LSTM) cells have recently demonstrated very promising results in language modeling, machine translation, speech recognition, and other fields related to sequence processing. The nice thing is that the system is almost plug and play: you feed in any inputs and get decent accuracy.

We have released a grapheme-to-phoneme toolkit based on the sequence-to-sequence encoder-decoder LSTM originally used for machine translation. This approach is already used successfully by Microsoft and Google for grapheme-to-phoneme conversion. The great thing about it is its extreme simplicity. One LSTM encodes the character sequence into a continuous space, and another LSTM decodes the phoneme sequence using an attention mechanism. Interestingly, training does not require phoneme-grapheme alignments, as conventional WFST approaches do; it simply learns from the data.

This implementation is based on TensorFlow, which allows efficient training on both CPU and GPU.

The code is available in the CMUSphinx section on GitHub.
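To make the attention step mentioned above a little more concrete, here is a minimal sketch in plain Python. It is not taken from the toolkit: the function names are hypothetical, and dot-product scoring is an assumption chosen for brevity (the released model may score encoder states differently). It only shows the general idea, that the decoder weighs all encoder states and sums them into one context vector.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(decoder_state, encoder_states):
    """Hypothetical helper: dot-product attention over encoder states.

    decoder_state  -- list of floats, the current decoder hidden state
    encoder_states -- list of equally sized lists, one per input character
    Returns (weights, context), where context is the weighted sum of the
    encoder states.
    """
    # Assumption: similarity is a plain dot product.
    scores = [
        sum(d * e for d, e in zip(decoder_state, state))
        for state in encoder_states
    ]
    weights = softmax(scores)
    dim = len(encoder_states[0])
    context = [
        sum(w * state[i] for w, state in zip(weights, encoder_states))
        for i in range(dim)
    ]
    return weights, context


if __name__ == "__main__":
    # Two toy encoder states; the decoder state points at the first one.
    weights, context = attend([10.0, 0.0], [[1.0, 0.0], [0.0, 1.0]])
    print(weights, context)
```

In a trained model both the states and the scoring function are learned, so the weights end up pointing at the characters most relevant to the phoneme being produced.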

You can download an example model with 2 hidden layers and 64 units per layer, trained on CMUdict, for generating pronunciations of new words, and use it in the following simple way:

python g2p.py --decode [your_wordlist] --model [path_to_model]

In addition, you can make new G2P models using any existing dictionary.

python g2p.py --train [train_dictionary.dic] --model [output_model_path]
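Since training needs no alignments, the training data is just a list of words with their pronunciations. As a rough illustration, the sketch below reads dictionary lines into (graphemes, phonemes) pairs. It assumes a CMUdict-style layout (a word followed by space-separated phonemes, `;;;` comment lines, and `(2)`-style markers for alternate pronunciations); `parse_dictionary` is a hypothetical helper, not part of g2p.py.

```python
import re


def parse_dictionary(lines):
    """Hypothetical helper: turn dictionary lines into training pairs.

    Each pair is (list of characters, list of phonemes). The two lists may
    have different lengths, because no alignment is needed.
    """
    pairs = []
    for line in lines:
        line = line.strip()
        # Assumption: ';;;' starts a comment line, as in CMUdict.
        if not line or line.startswith(";;;"):
            continue
        word, *phones = line.split()
        # Drop alternate-pronunciation markers such as "hello(2)".
        word = re.sub(r"\(\d+\)$", "", word)
        if phones:
            pairs.append((list(word), phones))
    return pairs


if __name__ == "__main__":
    sample = [
        ";;; a comment line",
        "hello HH AH L OW",
        "hello(2) HH EH L OW",
    ]
    for graphemes, phonemes in parse_dictionary(sample):
        print(graphemes, phonemes)
```

Note that "hello" has five letters but four phonemes; the model is left to work out the correspondence by itself.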

The tool lets you select various training parameters. Feel free to experiment with the number of parameters and the learning rate. Training is not fast: it takes about 1 day to train a large model, but it should be faster with a GPU.

We are still testing the accuracy of the model, but it seems to be comparable with the Phonetisaurus tool. The small model with a hidden layer size of 64 performs slightly worse but is very small (500 KB); the large model with 512 elements in the hidden layer is slightly more accurate.

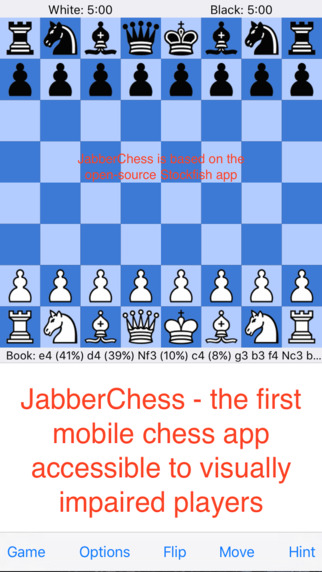
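If you want to run such a comparison yourself, G2P accuracy is commonly reported as word error rate (the share of words whose predicted pronunciation is not an exact match) and phoneme error rate (edit distance over the number of reference phonemes). The post does not say which metric was used, so the sketch below is an assumption, and both function names are hypothetical.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two phoneme lists."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, 1):
        cur = [i]
        for j, h in enumerate(hyp, 1):
            cur.append(min(
                prev[j] + 1,             # deletion
                cur[j - 1] + 1,          # insertion
                prev[j - 1] + (r != h),  # substitution or match
            ))
        prev = cur
    return prev[-1]


def error_rates(references, hypotheses):
    """Return (word error rate, phoneme error rate) for paired lists."""
    word_errors = 0
    phone_errors = 0
    phone_total = 0
    for ref, hyp in zip(references, hypotheses):
        word_errors += ref != hyp
        phone_errors += edit_distance(ref, hyp)
        phone_total += len(ref)
    return word_errors / len(references), phone_errors / phone_total


if __name__ == "__main__":
    refs = [["HH", "AH", "L", "OW"], ["K", "AE", "T"]]
    hyps = [["HH", "EH", "L", "OW"], ["K", "AE", "T"]]
    print(error_rates(refs, hyps))
```

The same held-out word list should be used for every model being compared, otherwise the numbers are not comparable.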

JabberChess, A Mobile Chess App Speech Recognizer for Visual and Motion Impaired Users

Jackson Chen, a high-schooler from Colorado, US, has recently released a voice-controlled chess application built with CMUSphinx. You can find it in the App Store.

It is a very interesting case of CMUSphinx used in a real-life application, not least because Jackson has written a complete report on his experience creating the chess application and shared the models he built in the process. He did a huge amount of work testing the application in real-life conditions. He compared the performance of CMUSphinx grammars and language models, and he also explored recognition with a commercial engine from Nuance. You can easily guess which engine provided the best accuracy; if you want to learn more, please check the full report. You can find the models here.

If you have created your own application with CMUSphinx, please let us know!